AI Stack Framework

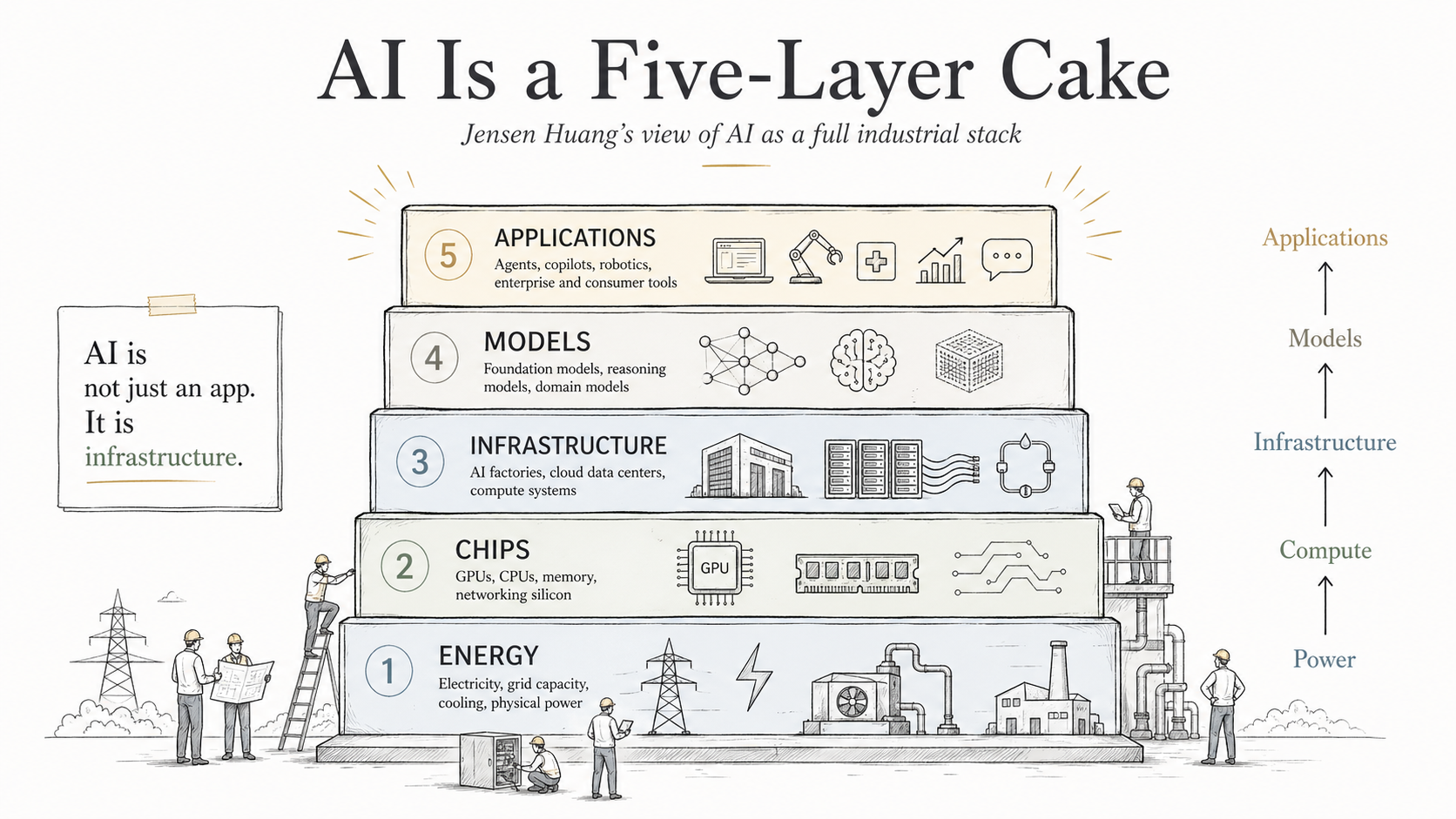

Jensen Huang's five-layer framework for understanding where value accumulates across the AI technology stack, from energy to applications.

Each layer of the framework has distinct investment characteristics, competitive dynamics, and risk profiles.

The Five Layers

1. Energy

Energy powers data centres. Energy suppliers are cyclical commodity businesses with limited technology differentiation. They benefit from rising AI demand but lack the pricing power and moat depth that characterise quality investments, so this layer is not a natural home for quality investing.

2. Chips

GPUs serve as the primary compute unit for AI training and inference. Nvidia's dominance at this layer is deep and self-reinforcing through the CUDA software ecosystem. The combination of hardware performance leadership and software lock-in creates a durable competitive position.

3. Infrastructure

Hyperscalers (AWS, Azure, Google Cloud) are investing heavily in data centres, compute capacity, networking, and software stacks. Their AI capital expenditure is not reckless spending; it is a signal about where they expect economic value to be generated. These companies have the balance sheets and existing customer relationships to monetise AI at scale.

4. Models

Companies building frontier AI models (OpenAI, Anthropic, xAI) sit at this layer. Most are private. For investors, exposure is gained indirectly through the infrastructure layer that provides the compute these models depend on.

5. Applications

This layer is too broad to generalise about. Some AI applications will generate extraordinary value; others will not. Each must be evaluated on its own merits.

Understanding which layer captures value — and which layers are investable — is the starting point for AI investing. The framework helps avoid the trap of treating "AI" as a single trade.

The chips and infrastructure layers offer the clearest combination of investability, competitive moats, and direct exposure to AI growth. The energy layer lacks differentiation, the models layer is mostly private, and the applications layer requires case-by-case analysis.

Related

- The Chips Layer — Deep dive into GPU dominance and the CUDA ecosystem

- The Infrastructure Layer — How hyperscalers are building the substrate of the AI economy

- The Applications Layer — Evaluating individual AI application companies